What is PyTorch?

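PyTorch is a Python-based scientific computing package aimed at two audiences:

- Those who want a replacement for NumPy that can use the power of GPUs for computation.

- Those who want a deep learning research platform with plenty of flexibility and speed.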

Getting Started

Tensors

Tensors are similar to NumPy's ndarrays, except that Tensors can also run computations on a GPU.
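As a rough illustration of the GPU point, the following sketch creates a tensor on a GPU when PyTorch can see one and falls back to the CPU otherwise. The variable names are illustrative only and do not come from the original tutorial.

```python
import torch

# Pick a GPU if PyTorch can see one; otherwise stay on the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Create a tensor directly on the chosen device.
a = torch.ones(2, 3, device=device)

# Arithmetic runs on whichever device holds the tensor.
b = a * 2

# Move the result back to the CPU, e.g. before converting it to NumPy.
b_cpu = b.to("cpu")
print(b_cpu.device)
```

The same code runs unchanged on machines with and without a GPU, which is why the device is chosen once and passed around.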

from __future__ import print_function

import torch

Construct a 5×3 matrix, uninitialized:

x = torch.empty(5, 3)

print(x)

Out:

tensor(1.00000e-04 *

[[-0.0000, 0.0000, 1.5135],

[ 0.0000, 0.0000, 0.0000],

[ 0.0000, 0.0000, 0.0000],

[ 0.0000, 0.0000, 0.0000],

[ 0.0000, 0.0000, 0.0000]])
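Because torch.empty only allocates memory, the values printed above are whatever happened to be in that memory, and they will likely differ from run to run. A minimal sketch of the usual pattern, assuming you fill the tensor before reading it (the name buf is illustrative):

```python
import torch

# Allocate a 5x3 tensor; its contents are arbitrary at this point.
buf = torch.empty(5, 3)
print(buf.shape)  # shape and dtype are defined even though the values are not

# Overwrite every element in place before using the values.
buf.fill_(0.0)
print(buf)
```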

Construct a randomly initialized matrix:

x = torch.rand(5, 3)

print(x)

Out:

tensor([[ 0.6291, 0.2581, 0.6414],

[ 0.9739, 0.8243, 0.2276],

[ 0.4184, 0.1815, 0.5131],

[ 0.5533, 0.5440, 0.0718],

[ 0.2908, 0.1850, 0.5297]])
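torch.rand draws each element from a uniform distribution on [0, 1), so your numbers will differ from the output above. If you want repeatable values, one option is to seed the random number generator first. This is only a sketch, and the seed value is arbitrary:

```python
import torch

torch.manual_seed(0)        # arbitrary seed chosen for illustration
r1 = torch.rand(5, 3)

torch.manual_seed(0)        # reseeding reproduces the same draw
r2 = torch.rand(5, 3)

print(torch.equal(r1, r2))           # the two tensors match element for element
print(r1.min() >= 0, r1.max() < 1)   # values lie in [0, 1)
```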

Construct a matrix filled with zeros and of dtype long:

x = torch.zeros(5, 3, dtype=torch.long)

print(x)

Out:

tensor([[ 0, 0, 0],

[ 0, 0, 0],

[ 0, 0, 0],

[ 0, 0, 0],

[ 0, 0, 0]])

Construct a tensor directly from data:

x = torch.tensor([5.5, 3])

print(x)

Out:

tensor([ 5.5000, 3.0000])
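When a tensor is built from data, its dtype is inferred from the values unless you pass one explicitly. The sketch below assumes PyTorch's default settings (default floating-point dtype float32); the variable names are illustrative.

```python
import torch

floats = torch.tensor([5.5, 3])   # one float makes the whole tensor floating point
ints = torch.tensor([5, 3])       # all-integer data gives an integer tensor
explicit = torch.tensor([5, 3], dtype=torch.float64)  # the dtype can be forced

print(floats.dtype, ints.dtype, explicit.dtype)
```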

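Create a tensor based on an existing tensor. These methods reuse properties of the input tensor, such as its dtype, unless you supply new values: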

x = x.new_ones(5, 3, dtype=torch.double)  # new_* methods take in sizes

print(x)

x = torch.randn_like(x, dtype=torch.float)  # override dtype!

print(x)  # result has the same size

Out:

tensor([[ 1., 1., 1.],

[ 1., 1., 1.],

[ 1., 1., 1.],

[ 1., 1., 1.],

[ 1., 1., 1.]], dtype=torch.float64)

tensor([[-0.2183, 0.4477, -0.4053],

[ 1.7353, -0.0048, 1.2177],

[-1.1111, 1.0878, 0.9722],

[-0.7771, -0.2174, 0.0412],

[-2.1750, 1.3609, -0.3322]])
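To make the "reuse unless overridden" behaviour concrete, here is a small sketch that leaves the dtype out and checks what the new tensors inherit. The names base, ones, same and as_float are illustrative.

```python
import torch

base = torch.zeros(2, 2, dtype=torch.double)

# No dtype given: new_ones reuses the dtype (and device) of `base`.
ones = base.new_ones(5, 3)

# randn_like reuses the shape and dtype of its input unless overridden.
same = torch.randn_like(ones)
as_float = torch.randn_like(ones, dtype=torch.float)

print(ones.dtype, same.dtype, as_float.dtype)
print(same.size() == ones.size())
```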

Get its size:

print(x.size())

Out:

torch.Size([5, 3])

Note

torch.Size is in fact a tuple, so it supports all tuple operations.
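A brief sketch of what treating torch.Size as a tuple looks like in practice (the variable names are illustrative):

```python
import torch

size = torch.rand(5, 3).size()

rows, cols = size                # tuple unpacking
print(rows, cols)
print(len(size), size[0])        # length and indexing
print(isinstance(size, tuple))   # torch.Size is a tuple subclass
print(size == (5, 3))            # compares equal to a plain tuple
```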

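Operations

There are multiple syntaxes for operations. In the following examples, we will look at the addition operation.

Addition: syntax 1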

y = torch.rand(5, 3)

print(x + y)

Out:

tensor([[-0.1859, 1.3970, 0.5236],

[ 2.3854, 0.0707, 2.1970],

[-0.3587, 1.2359, 1.8951],

[-0.1189, -0.1376, 0.4647],

[-1.8968, 2.0164, 0.1092]])

Addition: syntax 2

print(torch.add(x, y))

Out:

tensor([[-0.1859, 1.3970, 0.5236],

[ 2.3854, 0.0707, 2.1970],

[-0.3587, 1.2359, 1.8951],

[-0.1189, -0.1376, 0.4647],

[-1.8968, 2.0164, 0.1092]])

Addition: providing an output tensor as an argument

result = torch.empty(5, 3)

torch.add(x, y, out=result)

print(result)

Out:

tensor([[-0.1859, 1.3970, 0.5236],

[ 2.3854, 0.0707, 2.1970],

[-0.3587, 1.2359, 1.8951],

[-0.1189, -0.1376, 0.4647],

[-1.8968, 2.0164, 0.1092]])
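The three forms shown so far compute the same values. A small self-contained check, using the illustrative names p and q rather than the x and y above:

```python
import torch

p = torch.rand(5, 3)
q = torch.rand(5, 3)

s1 = p + q                  # operator form
s2 = torch.add(p, q)        # function form
s3 = torch.empty(5, 3)
torch.add(p, q, out=s3)     # writes the sum into a preallocated tensor

print(torch.equal(s1, s2), torch.equal(s1, s3))
```

The out= form can be handy when you want to reuse an existing buffer instead of allocating a new result each time.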

Addition: in-place

# adds x to y

y.add_(x)

print(y)

Out:

tensor([[-0.1859, 1.3970, 0.5236],

[ 2.3854, 0.0707, 2.1970],

[-0.3587, 1.2359, 1.8951],

[-0.1189, -0.1376, 0.4647],

[-1.8968, 2.0164, 0.1092]])

Note

Any operation that mutates a tensor in place has the suffix ‘_’. For example, x.copy_(y) and x.t_() will change x.
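The difference between an out-of-place method and its in-place counterpart (the one whose name ends in an underscore) can be sketched as follows. The names a, b and c are illustrative.

```python
import torch

a = torch.ones(2, 2)

b = a.add(1)     # out-of-place: returns a new tensor, `a` is untouched
print(a)

c = a.add_(1)    # in-place: modifies `a` and returns it
print(a)
print(c.data_ptr() == a.data_ptr())   # both names refer to the same memory
```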

You can use standard NumPy-like indexing:

print(x[:, 1])

Out:

tensor([ 0.4477, -0.0048, 1.0878, -0.2174, 1.3609])
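A few more indexing patterns, shown on a small tensor with predictable values so the results are easy to follow. The tensor m is an illustrative stand-in for x.

```python
import torch

m = torch.arange(15).reshape(5, 3)   # rows are [0, 1, 2], [3, 4, 5], ...

print(m[0])          # first row
print(m[:, 1])       # second column
print(m[1:3, :2])    # rows 1 and 2, first two columns
print(m[m > 12])     # a boolean mask selects the matching elements
```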

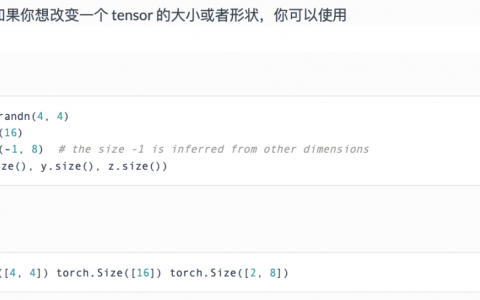

Resizing: if you want to resize or reshape a tensor, you can use its view method (torch.Tensor.view):

x = torch.randn(4, 4)

y = x.view(16)

z = x.view(-1, 8) # the size -1 is inferred from other dimensions

print(x.size(), y.size(), z.size())

Out:

torch.Size([4, 4]) torch.Size([16]) torch.Size([2, 8])
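One detail worth knowing is that view does not copy data: the result shares memory with the original tensor, and it only works when the memory layout allows it. The sketch below illustrates both points with illustrative names; reshape is shown as one fallback for non-contiguous tensors.

```python
import torch

x4 = torch.zeros(4, 4)
flat = x4.view(16)          # same storage, different shape

flat[0] = 1.0               # writing through the view...
print(x4[0, 0])             # ...is visible in the original tensor

t = x4.t()                  # a transpose is not contiguous in memory
print(t.is_contiguous())
r = t.reshape(16)           # reshape copies when a view is not possible
print(r.size())
```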

If you have a one-element tensor, use .item() to get its value as a Python number.

x = torch.randn(1)

print(x)

print(x.item())

Out:

tensor([ 0.9422])

0.9422121644020081
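The Python type returned by .item() follows the tensor's dtype, and it also works on zero-dimensional tensors such as the result of a sum. A short sketch with illustrative names:

```python
import torch

f = torch.tensor([0.5])
i = torch.tensor([3])
total = torch.ones(2, 2).sum()   # zero-dimensional tensor

print(type(f.item()), f.item())  # a Python float
print(type(i.item()), i.item())  # a Python int
print(total.item())
```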

Original article by pytorch. If you republish it, please credit the source: https://pytorchchina.com/2018/06/25/what-is-pytorch/